Study of modern image formats

What's the state of modern image formats, and which one should you choose for the web today? In this article, let's study modern image formats (AVIF, HEIF, WebP, WebP2 and JPEG XL) as precisely as possible. 🖼️

First, I'll explain how I studied these image formats. Then I'll compare the encoders' performance, graph it and analyse it. Finally, on the basis of the data analysis, I'll compare the image formats and their use cases.

This article is going to be long and technical. Feel free to go straight to the analysis section, or even the conclusion.

Table of contents

- Study protocol

- Analysis of produced images

- Conclusion

Study protocol

I've put together a set of free-to-use images found on the web. The dataset is made up of images of widely varying dimensions, file sizes, origins and uses. In total, 169 images from 6 different datasets were analysed.

Each encoder converts every image while varying the speed (sometimes called effort) and quality options. As a result, 308 output images were produced for each source image.
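As an illustration, here is a minimal sketch of how such a parameter grid could be enumerated. The exact grid is not stated in this article, so the values below are assumptions: quality in steps of 10, no effort option for AVIF and HEIF, and 9, 7 and 10 effort levels for JPEG XL, WebP and WebP2 respectively. This is simply one combination that is consistent with the 308 images per source image.

```python
from itertools import product

# Assumed grid (not confirmed by the article): quality 0, 10, ..., 100.
QUALITIES = range(0, 101, 10)

# Assumed effort levels per format; None means "no effort option".
EFFORTS = {
    "avif": [None],
    "heif": [None],
    "jxl": range(1, 10),    # 9 levels (assumption)
    "webp": range(0, 7),    # 7 levels (assumption)
    "webp2": range(0, 10),  # 10 levels (assumption)
}


def parameter_grid():
    """Yield every (format, quality, effort) combination to encode."""
    for fmt, efforts in EFFORTS.items():
        for quality, effort in product(QUALITIES, efforts):
            yield fmt, quality, effort


grid = list(parameter_grid())
print(len(grid))  # 308
```

With 169 source images, this kind of grid gives 169 × 308 = 52 052 encodings in total.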

Since the formats studied all use lossy compression, it is necessary to estimate the quality lost during the encoding process. Each image produced was decoded into PNG format. This was used to calculate the structural similarity in order to estimate the loss of quality induced by the encoder [1].
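To give an idea of what such a measure looks like, here is a deliberately simplified sketch. It computes SSIM over a single window covering the whole greyscale image and derives a dissimilarity from it as `1 / SSIM - 1`. This is an assumption made for illustration: the tool actually used in this study works on windows, several scales and colour channels, so its values will differ from this sketch.

```python
from statistics import fmean


def global_ssim(x, y, max_value=255):
    """Single-window SSIM between two equally sized greyscale pixel lists."""
    if len(x) != len(y) or not x:
        raise ValueError("images must be non-empty and have the same size")
    # Stabilising constants from the usual SSIM definition.
    c1 = (0.01 * max_value) ** 2
    c2 = (0.03 * max_value) ** 2
    mean_x, mean_y = fmean(x), fmean(y)
    var_x = fmean([(a - mean_x) ** 2 for a in x])
    var_y = fmean([(b - mean_y) ** 2 for b in y])
    cov = fmean([(a - mean_x) * (b - mean_y) for a, b in zip(x, y)])
    numerator = (2 * mean_x * mean_y + c1) * (2 * cov + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


def dissimilarity(x, y):
    """0 means identical; larger values mean more visible difference."""
    ssim = global_ssim(x, y)
    if ssim <= 0:
        return float("inf")
    return 1 / ssim - 1


source = [0, 64, 128, 255]
decoded = [10, 60, 140, 250]  # made-up pixel values
print(dissimilarity(source, source))  # 0.0
print(dissimilarity(source, decoded) > 0)  # True
```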

For each image, the data collected from this process is as follows:

- If the encoding process was successful (encoder return code equal to 0).

- The name of the image and its dataset.

- The encoder format and the quality and effort parameters (compression speeds) used by the encoder.

- The size of the source image and the target image in order to calculate the compression ratio.

- The time taken to encode and decode the target image.

- The structural similarity measure [2].

- The date on which the image was generated.
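The record described by this list could be sketched as follows. The field names, the `timed_run` helper and the definition of the compression ratio (target size divided by source size, so a value above 1 means the file grew) are assumptions made for illustration, not the actual code of the study.

```python
import subprocess
import sys
import time
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


def timed_run(command):
    """Run a command and return (return_code, elapsed_seconds)."""
    start = time.perf_counter()
    completed = subprocess.run(command, capture_output=True)
    return completed.returncode, time.perf_counter() - start


@dataclass
class Measurement:
    image: str
    dataset: str
    fmt: str
    quality: int
    effort: Optional[int]
    success: bool            # encoder return code equal to 0
    source_size: int         # bytes
    target_size: int         # bytes
    encode_seconds: float
    decode_seconds: float
    dssim: Optional[float]   # None when the image could not be decoded
    generated_at: datetime

    @property
    def compression_ratio(self):
        """Assumed definition: target size relative to source size."""
        return self.target_size / self.source_size


# Placeholder command standing in for a real encoder invocation.
code, seconds = timed_run([sys.executable, "-c", "pass"])
sample = Measurement(
    image="example.png", dataset="png_random", fmt="webp",
    quality=90, effort=6, success=(code == 0),
    source_size=1000, target_size=250,
    encode_seconds=seconds, decode_seconds=0.0, dssim=0.01,
    generated_at=datetime.now(timezone.utc),
)
print(sample.compression_ratio)  # 0.25
```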


Expected results

I expect WebP to underperform the other formats on all counts. Also, AVIF should perform less well than WebP2. If the withdrawal of support for JPEG XL in Chrome and Firefox is anything to go by, I expect JPEG XL to perform less well than WebP2 and offer little in comparison with AVIF.

Dataset

I compiled this dataset myself. I don't own the rights to the images, but I chose royalty-free images whenever possible. I have favoured variety over freedom of use. A completely free dataset could have been put together, but it would have taken a lot more time. Although I find this ridiculous, technically I own the rights to the dataset. You are therefore free to reuse and modify it as long as you respect the original licences for the images.

You can download it from this torrent file or this magnet link 🧲.

| Dataset source | Format | Number of images | Description | License |
|---|---|---|---|---|
| low_def_imgs_set_jpg | jpg | 39 | Subset of the USC-SIPI dataset [3]. | Complex, but it is accepted that the images can be used for research. |
| png_random | png | 38 | A variety of PNG images gathered over a few hours of browsing the internet. A mix of "free for personal use", CC and, certainly, images shared under a restrictive licence. | |
| photos_imgs_set_jpg | jpg | 16 | Personal holiday and travel photos. | I own the rights and authorise use under the CC-BY 2.0 licence (attribution: ache). |
| high_def_imgs_set_jpg | jpg | 28 | Professional-quality, royalty-free images from the Pexels website. The attribution of each image is given in the name of the photo. | Free to use |
| selected_holliday_photos_jpg | jpg | 24 | Subset of the INRIA dataset. | Property of INRIA [4]. Use is conditional on citing the paper it is taken from. |
| xkcd | png | 24 | A selection of panels from the online comic strip XKCD. | CC-BY-NC |

Encoders

I used a specific version of the encoders, the most recent available. This improves the reproducibility of the experiment.

| Format | Transparency support | Quality interval | Effort interval | Encoder / Decoder | Version |
|---|---|---|---|---|---|
| AVIF | ✔️ | 0-100 | Option unavailable | heif-enc / heif-convert | 1.15.2 |
| HEIF | ✔️ | 0-100 | Option unavailable | heif-enc / heif-convert | 1.15.2 |
| JXL | ✔️ | 0-100 | 1-9 [5] | cjxl / djxl | v0.8.1 (c27d4992) |
| WebP | ✔️ | 0-100 | 0-7 | cwebp / dwebp | 1.3.0 (libsharpyuv: 0.2.0) |
| WebP2 | ✔️ | 0-100 | 0-10 | cwp2 / dwp2 | 0.0.1 (b80553d) |

It should be noted that not all formats and encoders offer the same functionality. AVIF and WebP2, for example, do not offer progressive decoding. However, I never activated this option in the cjxl encoder.

Where possible, I enabled multithreading, i.e. with WebP and WebP2. For WebP2 I limited the number of threads to 8, whereas WebP did not offer this option.

Similarly, the parameters are not comparable between encoders! ⚠️ A quality of 50 for AVIF does not correspond to a quality of 50 for WebP2.

One mistake I made was to include quality 100 for JPEG XL, which automatically activates its lossless compression mode, whereas the other encoders need a dedicated option for this.
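To make the options discussed above more concrete, here is a sketch of how the command lines could be assembled. The flag names are quoted from memory of each tool's help output and are assumptions to be checked against the installed versions. This is not the script used for the study.

```python
def encode_command(fmt, src, dst, quality, effort=None, threads=8):
    """Build an encoder command line as a list of arguments (hypothetical helper)."""
    q = str(quality)
    if fmt in ("avif", "heif"):
        # heif-enc exposes no effort option in this study.
        avif_flag = ["--avif"] if fmt == "avif" else []
        return ["heif-enc", *avif_flag, "-q", q, "-o", dst, src]
    if effort is None:
        raise ValueError(f"{fmt} needs an effort level")
    if fmt == "jxl":
        # Reminder: quality 100 switches cjxl to lossless mode.
        return ["cjxl", src, dst, "-q", q, "-e", str(effort)]
    if fmt == "webp":
        # -mt enables multithreading, without a thread count.
        return ["cwebp", "-q", q, "-m", str(effort), "-mt", "-o", dst, src]
    if fmt == "webp2":
        return ["cwp2", "-q", q, "-effort", str(effort),
                "-mt", str(threads), "-o", dst, src]
    raise ValueError(f"unknown format: {fmt}")


print(" ".join(encode_command("webp2", "in.png", "out.wp2", 90, effort=6)))
# cwp2 -q 90 -effort 6 -mt 8 -o out.wp2 in.png
```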

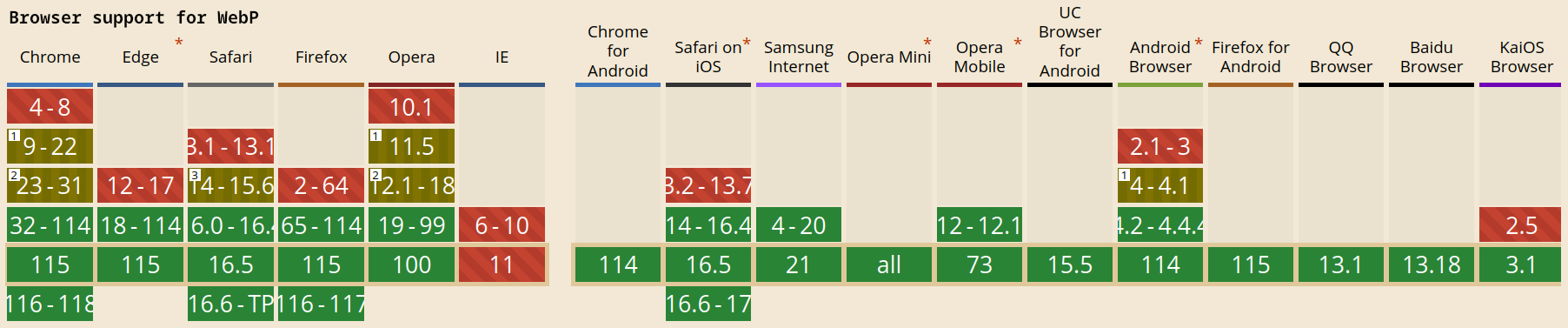

Browser support

As of August 2023, at the time of writing:

- WebP has the best support

- AVIF also has very good support, but Edge and some more niche browsers have yet to support it.

- JXL is only supported by Safari. Experimental support existed in Chrome and Firefox, but it has now been removed from Chromium. In Firefox, it is only available in Nightly, by enabling the `image.jxl.enabled` option.

- HEIF is only supported in recent versions of Safari.

- WebP2 has no support of any sort.

Support for the various formats is currently listed on the caniuse.com website.

Features

| Format | Animation | HDR | Progressive decoding | Maximum number of channels | Maximum dimensions |
|---|---|---|---|---|---|
| WebP | ✔️ | ✖️ | ✖️ | 4 | 16 383 x 16 383 |
| AVIF | ✔️ | 10bits | ✖️ | 5 | 8193x4320 |
| JXL | ✔️ | 12bits | ✔️ | 5 | 1 703 741 823 x 1 703 741 823 |
| WebP2 | ✔️ | 10bits | 🤷 | 4099 | 16 383 x 16 383 |

AVIF supports arbitrary dimensions, but in this case the image is split into 8193x4320 blocks. No consistency is ensured at the boundaries between these blocks, which can cause artefacts.

About the 🤷 for WebP2: is what it offers synonymous with progressive decoding? It certainly is. Is it already implemented? I don't know.

Analysis of produced images

We will first analyse the errors and then the performance of each encoder.

Encoding errors 🐛 🐞

There are two types of error:

- When the encoding process crashes or fails, returning an error code other than 0.

- When, at the end of the encoding process, the generated image is particularly different from the source image. I arbitrarily set a threshold of `0.4` for similarity [2]: any image with a higher DSSIM value is considered to be in error.
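These two rules can be sketched as a small classification function. The function and label names are hypothetical. Only the return-code check and the `0.4` threshold come from the text above.

```python
DSSIM_THRESHOLD = 0.4  # arbitrary threshold chosen for this study


def classify(return_code, dssim):
    """Label one encoding attempt as 'crash', 'glitch' or 'ok'."""
    if return_code != 0:
        # First type of error: the encoder process failed.
        return "crash"
    if dssim > DSSIM_THRESHOLD:
        # Second type: the output is too different from the source.
        return "glitch"
    return "ok"


print(classify(0, 0.02))  # ok
print(classify(0, 0.75))  # glitch
print(classify(1, 0.0))   # crash
```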

heif-enc to encode AVIF and HEIF

heif-enc has a problem handling EXIF data, and the resulting image is not oriented correctly.

As a result, the calculation of structural similarity could not work, as the original and produced images do not have the same dimensions. This error affects the AVIF and HEIF formats. In order to deal with this case, the EXIF data has been removed from all the images. This should not affect the size of the image produced. As this error has been circumvented, it has not been counted as an error.

Also on some PNGs the image produced was particularly different from the source image.

This seems to be a problem in decoding the source image, since both formats are affected. In addition, the image produced was recognisable but badly damaged. 9 source images were affected, and the error was systematic for each image produced from these source images.

cjxl encoder

The cjxl encoder always reports success, but the decoding step failed 3 times.

This suggests that cjxl did not produce a valid image. In a way, this is the worst possible bug. Testing with another JPEG XL decoder implementation, we get a more explicit "Unexpected end of file" error.

There were also 3 images generated that are particularly different from the source image.

However, unlike with heif-enc, this error only occurs when images are encoded with a quality of 100 and an effort of 1, called lightning ⚡. The 3 images affected by this bug are unrecognisable but, curiously, the set of colours used is recognisable. It should be noted that these 3 images were also among those that heif-enc did not reproduce faithfully. It's unlikely to be a coincidence, but it's possible.

cwebp and cwp2 encoders

The WebP encoder fails on 6 images and the WebP2 encoder fails on 1 image.

The WebP2 encoding error is clear: WP2_STATUS_BAD_DIMENSION. In fact, the image in question is particularly large. This is the only error.

The WebP encoder fails on the same image, for the same reason, but also on 5 other images. In these cases the error returned is:

Saving file 'test_crash.webp'

Error! Cannot encode picture as WebP

Error code: 6 (PARTITION0_OVERFLOW: Partition #0 is too big to fit 512k.

To reduce the size of this partition, try using less segments with the -segments option, and eventually reduce the number of header bits using -partition_limit. More details are available in the manual (`man cwebp`)

Given that the error only occurs with quality at 90 or 100 and effort at 0 or 1 simultaneously, I consider this to be a borderline case that the encoder has difficulty handling. I didn't investigate further.

I note that, for these two encoders, no output image is too dissimilar from its source image. This is a very good thing. 👌

Conclusion of the study of errors

Only the JPEG XL encoder silently produces invalid files, and it also produces glitches. The PNG decoding part of heif-enc needs to be improved, as it has many bugs that also produce glitches. The WebP and WebP2 encoders fail on images that exceed the maximum dimensions supported by their formats. The official WebP implementation also has a bug in certain limited cases.

From this point, we will exclude cases with errors from our statistics. As a result, AVIF and HEIF will have 9 fewer images. JPEG XL, WebP and WebP2 will have no data corresponding to certain parameters.

The bugs encountered with JPEG XL have since been fixed, but the fix is not yet available in the latest release. I reported the heif-enc bug and it was fixed promptly. I didn't do the same for WebP, as the process requires a Google account.

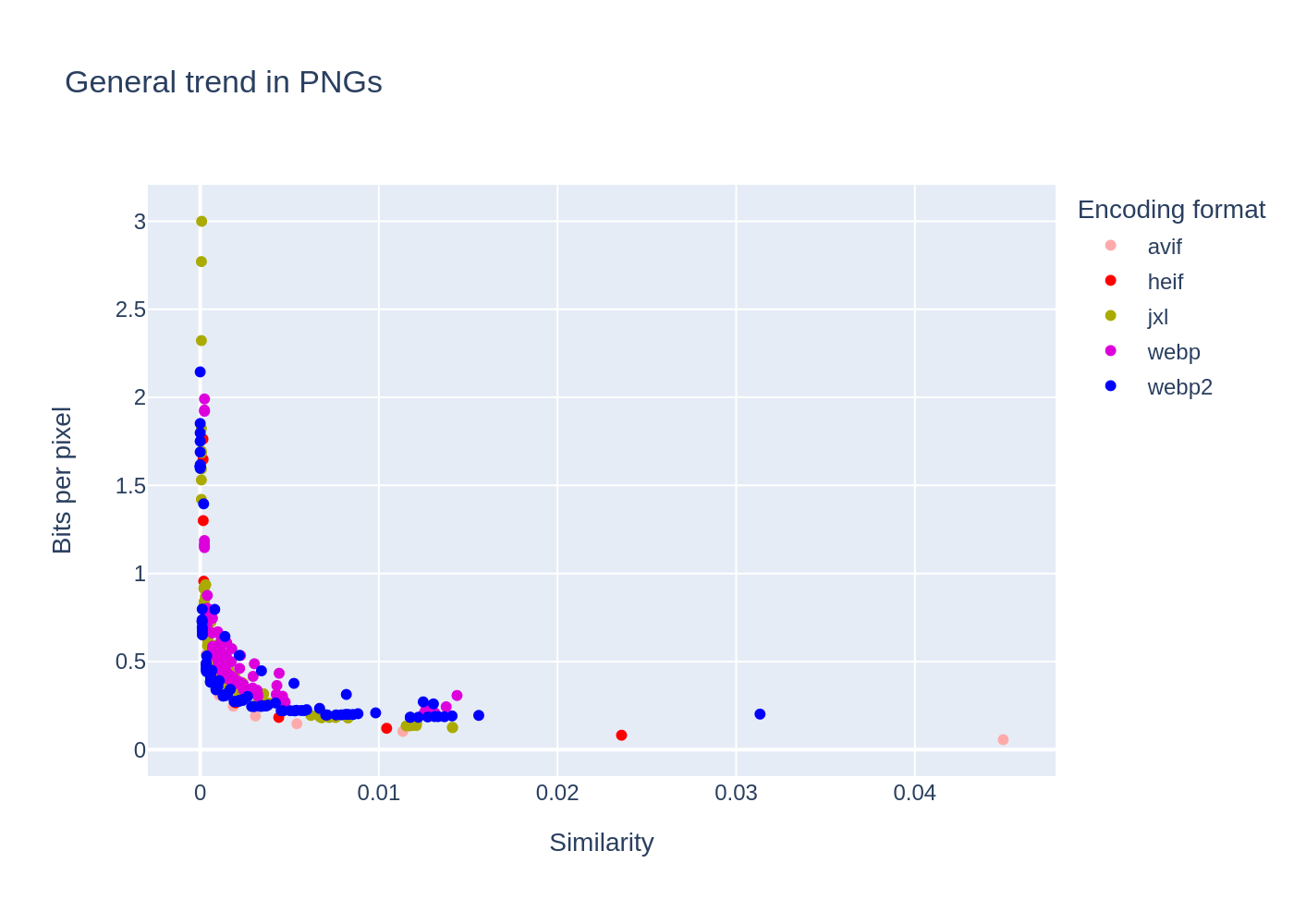

Compression rate compared to the quality of the image produced

For ease of comparison, I'm going to compare by dataset. It turns out that many images in the same dataset follow the same trends, so I'll try to list these trends.

Low definition images

Within the low_def_imgs_set_jpg dataset there are 3 trends. A small set of images where the AVIF and HEIF formats do particularly badly, 3 images where the variation in performance is very large and the rest where all the image formats have roughly equivalent performance.

In around 13 cases, the AVIF and HEIF formats performed worse than all the others: the best dissimilarity they reach remains far from 0. Looking at the images concerned, they do not seem to have anything in common.

This poor performance can be explained either by a decoding problem or by the fact that the algorithms are not effective on these images.

On 3 images, it's clear that the results are very varied. The performance of each format depends very much on the effort and quality configured. Despite the wide variations, there is a clear segmentation where WebP2 clearly outperforms its competitors. AVIF and HEIC are OK, but JPEG XL lags behind WebP.

The images concerned are clearly greyscale test images.

Finally, for the rest of the low-resolution images, the performance of all the image formats is more or less identical. Only WebP lags slightly behind.

Random PNGs

Here, it is not easy to define a trend. Generally speaking, AVIF is equivalent to WebP2 and both do better than JPEG XL. WebP is generally pretty bad, but it's not uncommon for it to match JXL. This is only clearly visible in some of the graphs, however.

However, the slight dominance of AVIF over HEIF is clear in this data set. Also, on a fairly large number of images, the AVIF and HEIF formats manage to reduce the size of the image by reducing its quality, whereas the other algorithms reach a threshold (each with a different threshold) that they are unable to exceed.

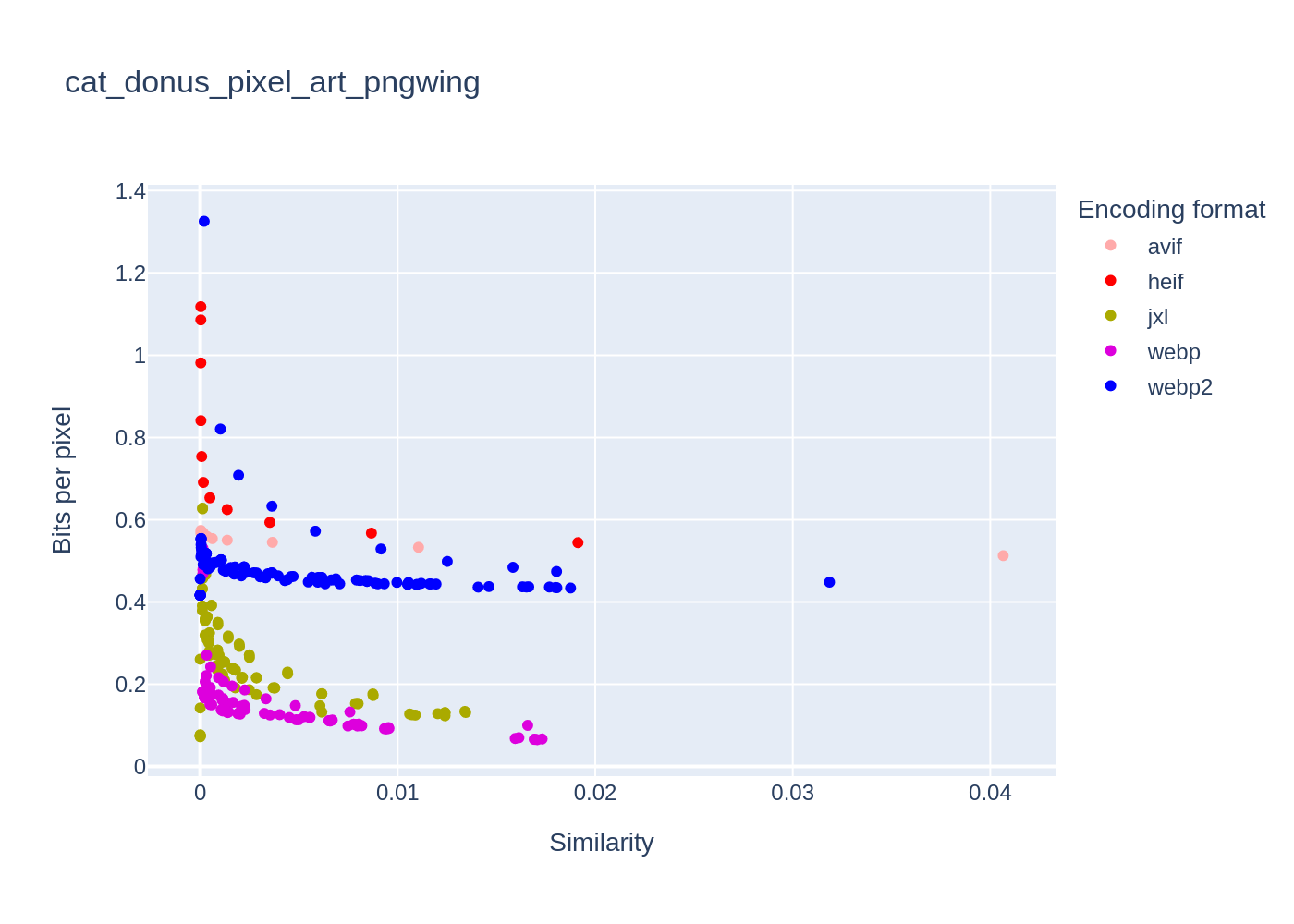

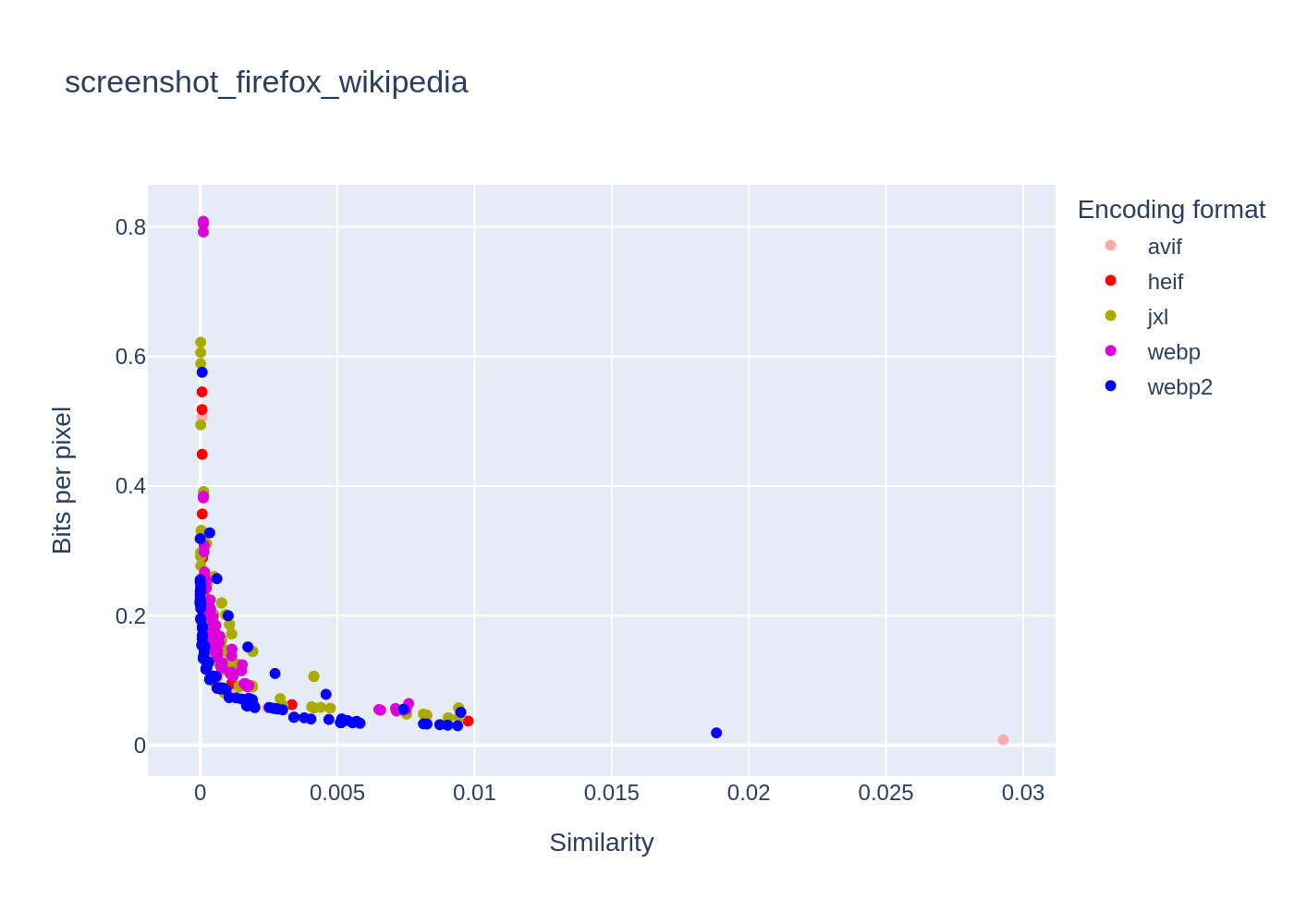

Some images (👽) have a very unusual graph!

This is the case with cat_donus_pixel_art where JPEG XL is very good at 100 quality (lossless) but does slightly less well than WebP otherwise. WebP also gives very good results, whereas WebP2 has difficulty optimising image size. AVIF gives very poor results, while HEIF systematically increases image size (except at quality 0).

Also, on large, highly detailed images, JPEG XL can become competitive. This is the case, for example, on the screenshot of the home page of https://wikipédia.org, where JPEG XL becomes as good as WebP2, itself better than AVIF. JPEG XL performs better on large images (in size) such as eiffel_tower_pngwing and screenshot_firefox_c_quirks_en_ache_one.

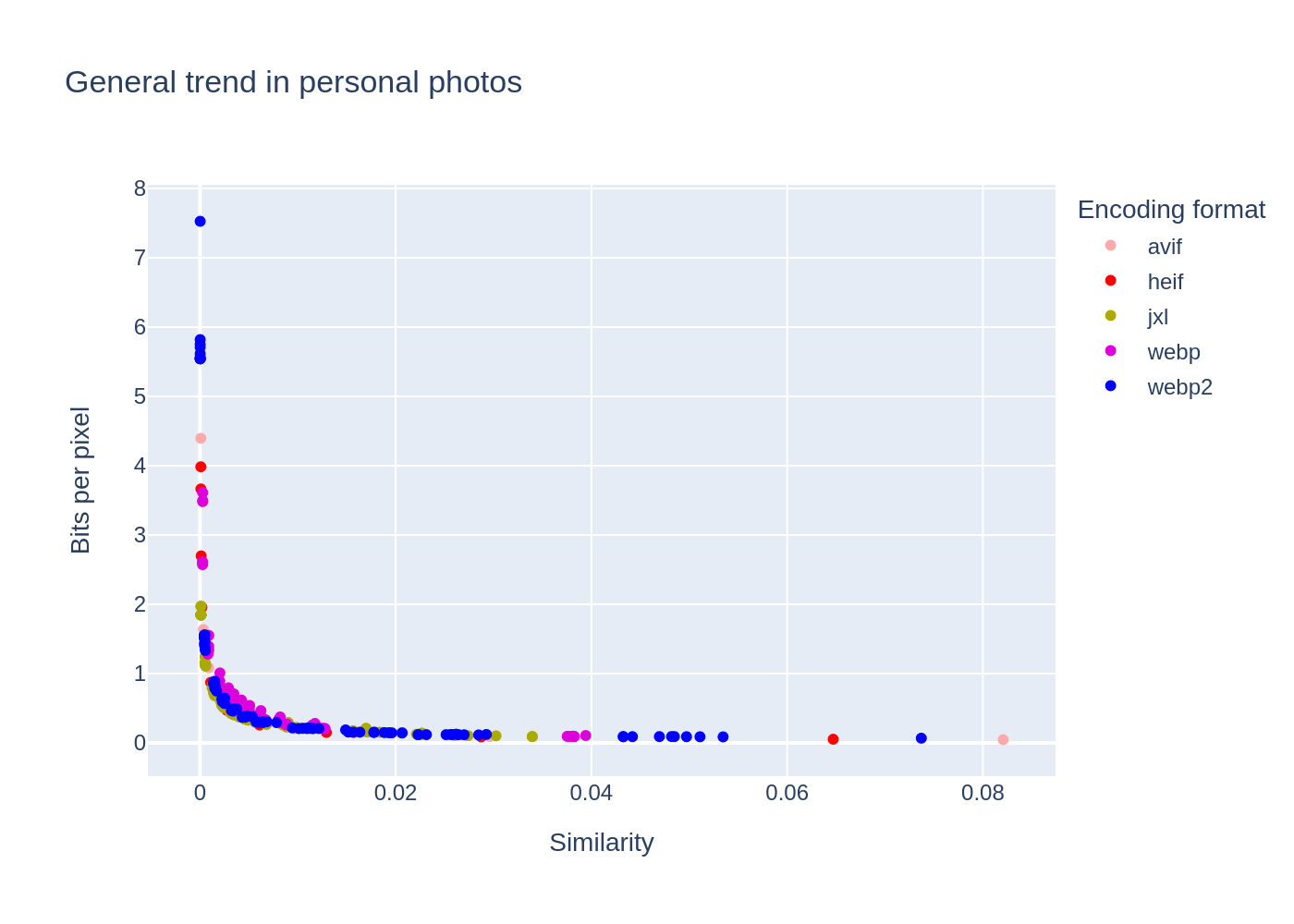

Personal holiday photos

The trend is very clear in this dataset. All formats except WebP are excellent.

Note the mediocre performance of WebP2 at quality 100: the file is almost 6 times larger than the original on the nantes_église image and almost 5 times larger on sleeping_bridge_beauty. AVIF suffers from the same problem at quality 100 and 90, but not to the same extent. JPEG XL never encounters this problem.

High definition images

The trend is identifiable. All formats except WebP are excellent. We can even rank JPEG XL first, AVIF/HEIF second and WebP2 third. It should be noted that this ranking is not true for all images. Also, all three formats have very good results, so the difference between WebP2 and JPEG XL is not that noticeable.

This is broadly the same trend as in the personal holiday photo dataset, although the performance achieved is better on average.

No image stands out from the crowd, except for WebP2 at quality 100 and effort 0, where the initial size is multiplied by 8.5!

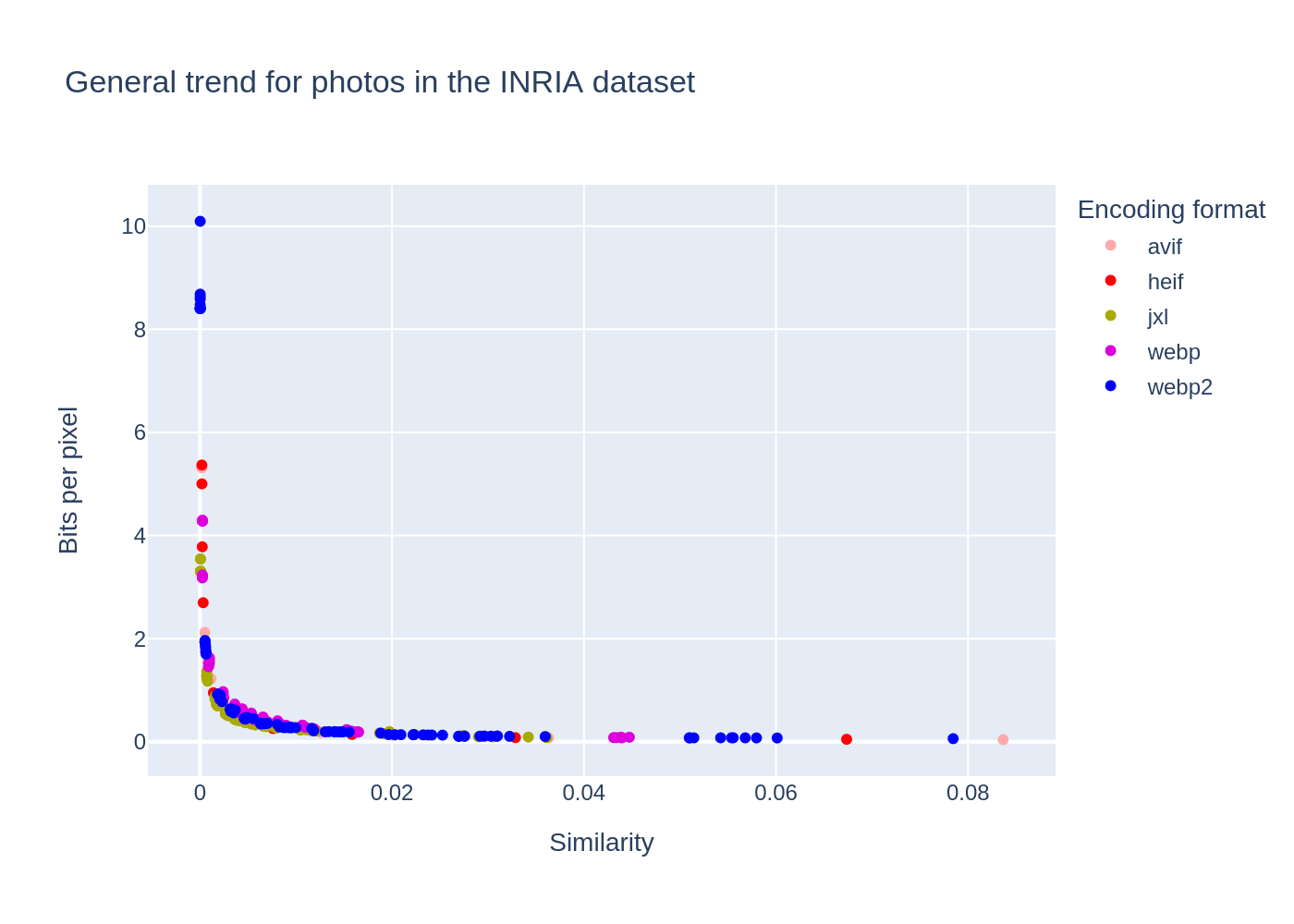

Photos of the INRIA dataset

Same analysis as for the two previous datasets. 🤷

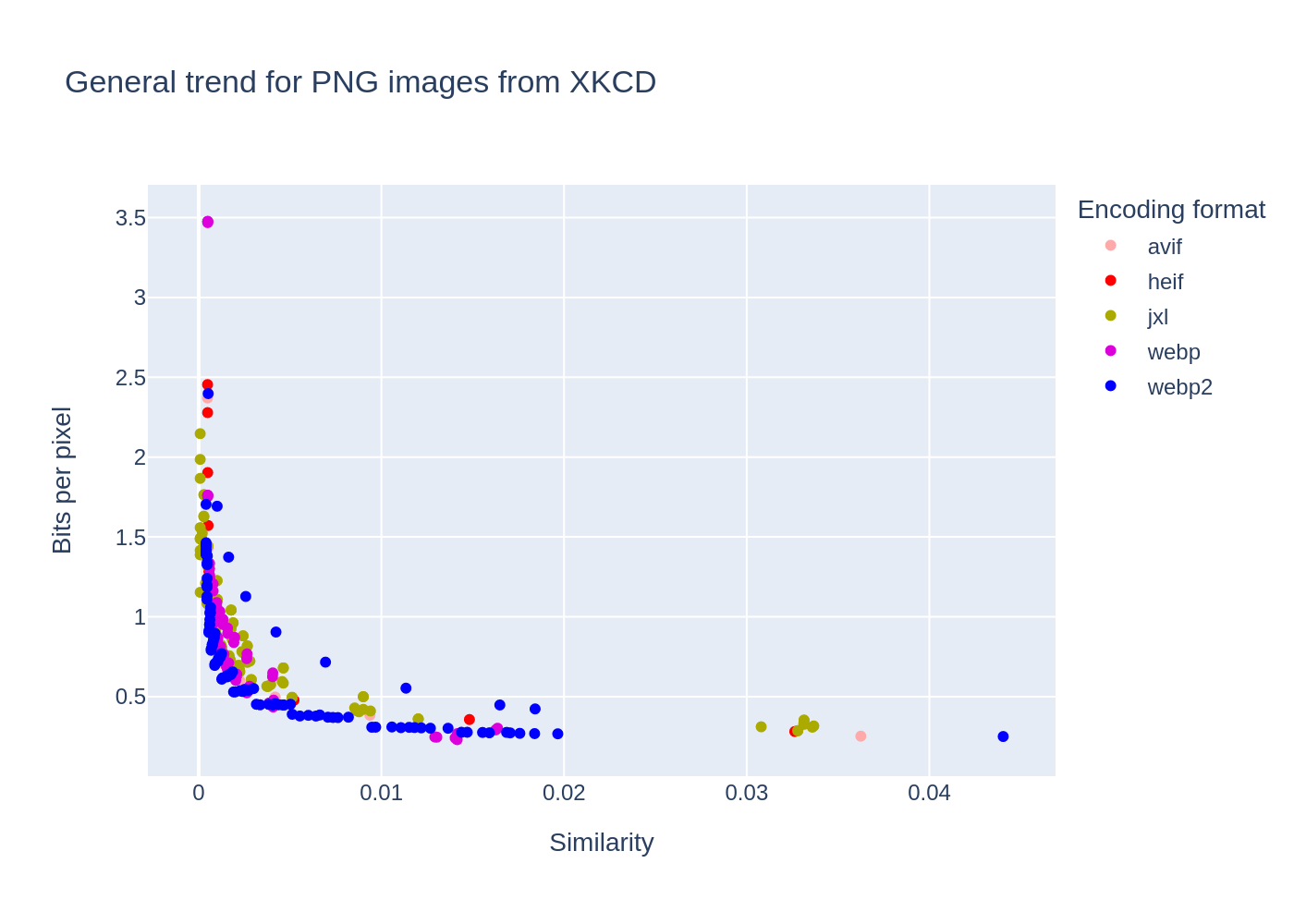

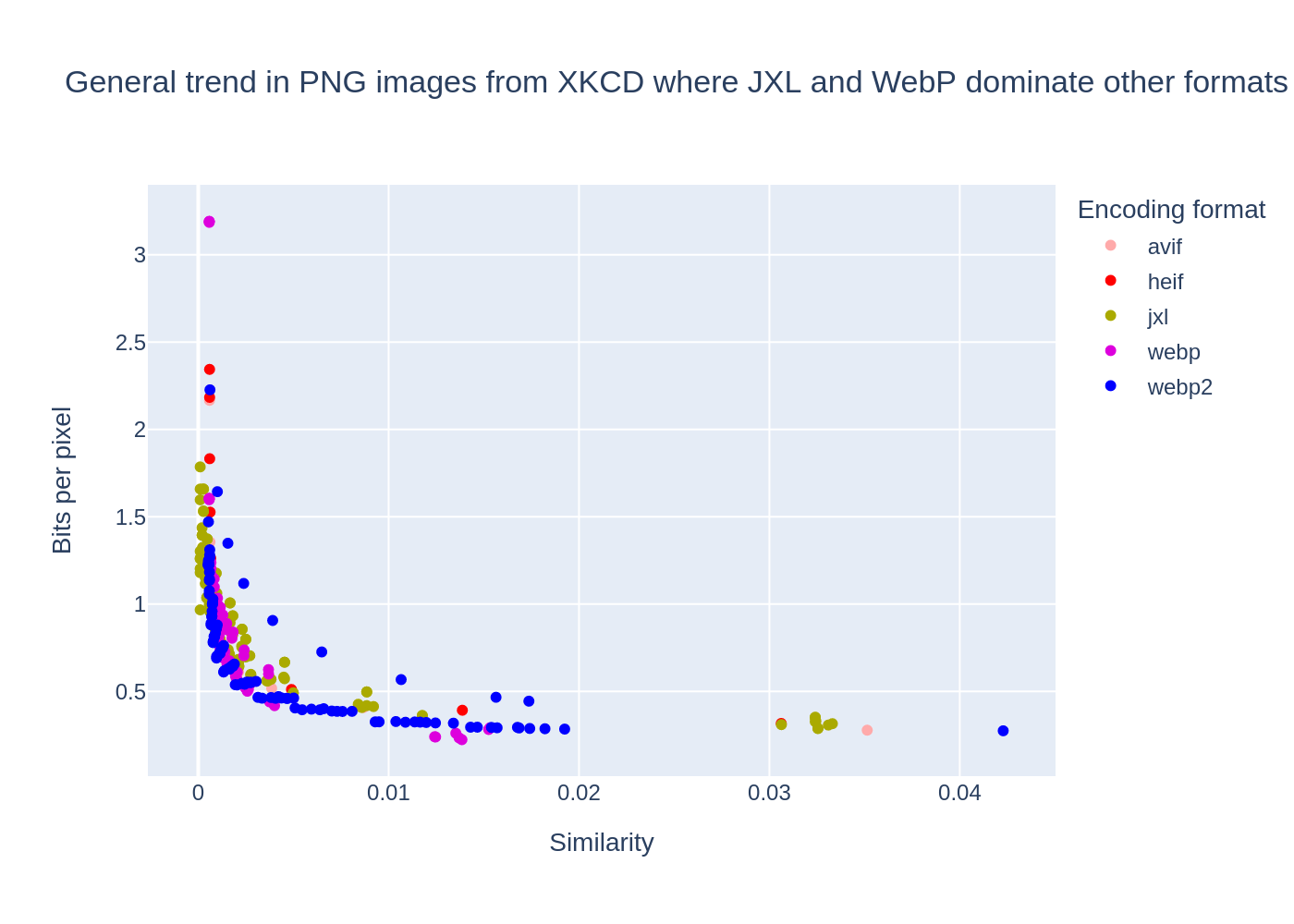

PNG images from XKCD website

Very poor results for JPEG XL, which is worse overall than WebP, except on one image (1416.jpg) where it excels in lossless mode. WebP2 does well, and AVIF/HEIF is roughly equivalent to it. There are two trends.

The first, and most common, is the dominance of WebP2 and AVIF/HEIF (equivalent) over the other formats. However, WebP2 performs better on certain images (136, 209, 399, 695, 731, 1071). What these images have in common is that they have colours, often a lot of colours. But there are some colour images where the performance gain is not significant.

The second, which can be seen in images 1123, 1144 and to a lesser extent 376, 1163, 1195 and 1445, is that JXL is better than the others when the quality is good and WebP is better than the others when the quality is poor. This trend was difficult to predict. What these images have in common is their small size.

One exception is image 1416, which triggered the heif-enc bug:

- It was very hard to compress for the various encoders.

- WebP does not have a single image smaller than the original.

- JXL needs a quality of 100 to produce images smaller than the original.

- WebP2 has very good results at 100 quality, otherwise it doesn't achieve much, even if it sometimes manages to make the image lighter than the original.

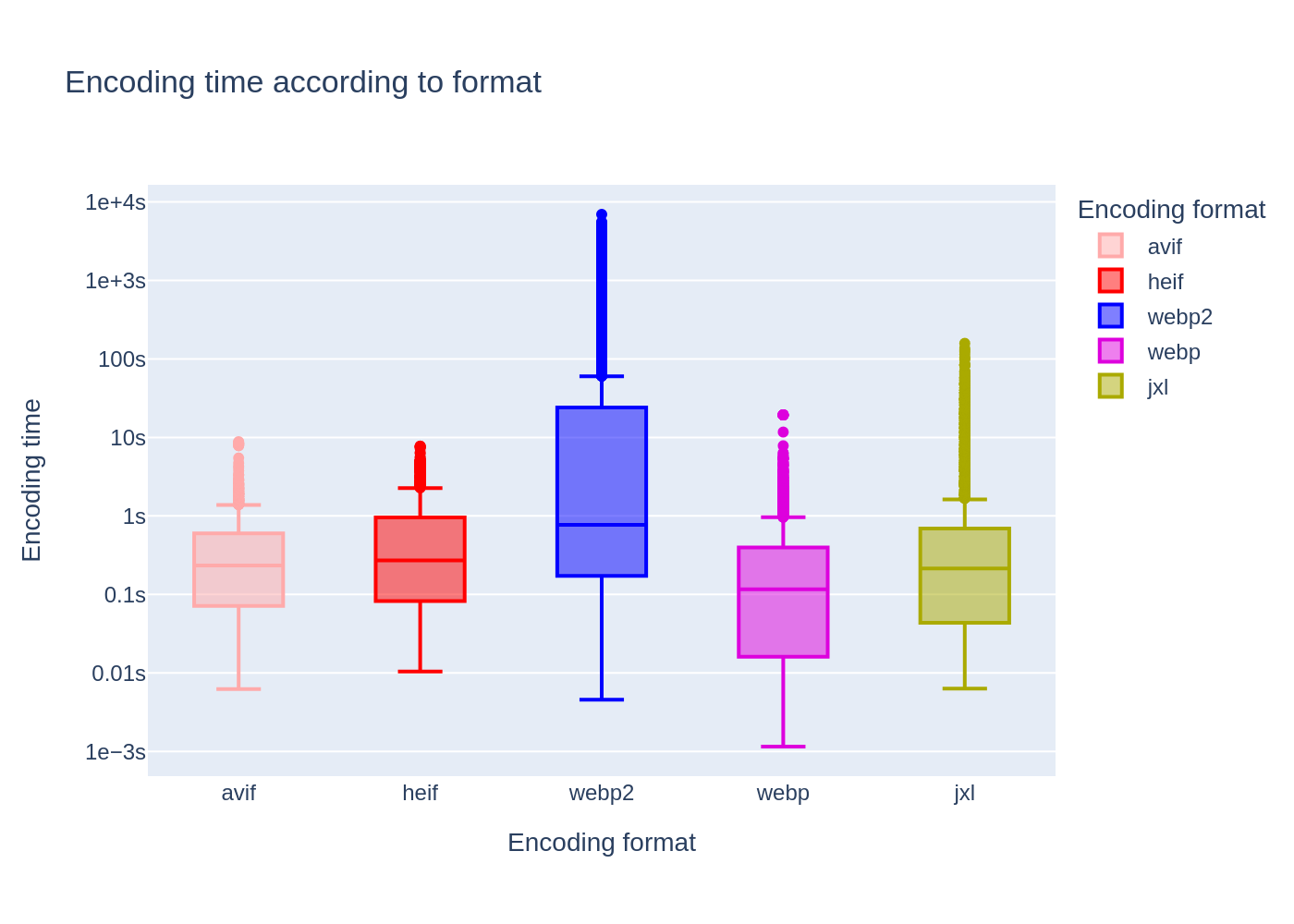

Overall encoder speed

For the same quality, encoding times vary widely between encoders. Taking the AVIF data points as a baseline and comparing them with the points from the other formats that have a similar similarity score and a similar compression ratio, we obtain this graph:

The scale is logarithmic in order to display all the data on the graph.

WebP is the fastest format overall. Next come AVIF and HEIF, with JPEG XL very close behind. JPEG XL is even faster on average. The anomaly here is WebP2, which is much slower overall (bear in mind that the scale is logarithmic).

AVIF and HEIF are the most consistent, being the only encoders to encode all images in less than 10s. JPEG XL took up to 2 minutes to encode certain images, while WebP2 sometimes took 2 hours to encode an image!
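The matching used for this speed comparison (AVIF points as a basis, compared with points of similar similarity and ratio) could be sketched like this. The relative tolerances, the tuple layout and the sample numbers are assumptions made for illustration. They are not the values used to draw the graph.

```python
def relative_encode_times(reference, candidates, tolerance=0.1):
    """Compare encode times between points of similar quality and ratio.

    Each point is a (dssim, compression_ratio, encode_seconds) tuple.
    Returns candidate_time / reference_time for every matching pair.
    """
    ratios = []
    for ref_dssim, ref_ratio, ref_seconds in reference:
        for dssim, ratio, seconds in candidates:
            close_quality = abs(dssim - ref_dssim) <= tolerance * ref_dssim
            close_ratio = abs(ratio - ref_ratio) <= tolerance * ref_ratio
            if close_quality and close_ratio:
                ratios.append(seconds / ref_seconds)
    return ratios


# Made-up sample points, for illustration only.
avif_points = [(0.010, 0.20, 2.0)]
webp2_points = [(0.0105, 0.21, 40.0), (0.050, 0.10, 5.0)]
print(relative_encode_times(avif_points, webp2_points))  # [20.0]
```

A value of 20.0 means the matched point took 20 times longer to encode than its AVIF counterpart, which is the kind of spread that makes a logarithmic scale necessary.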

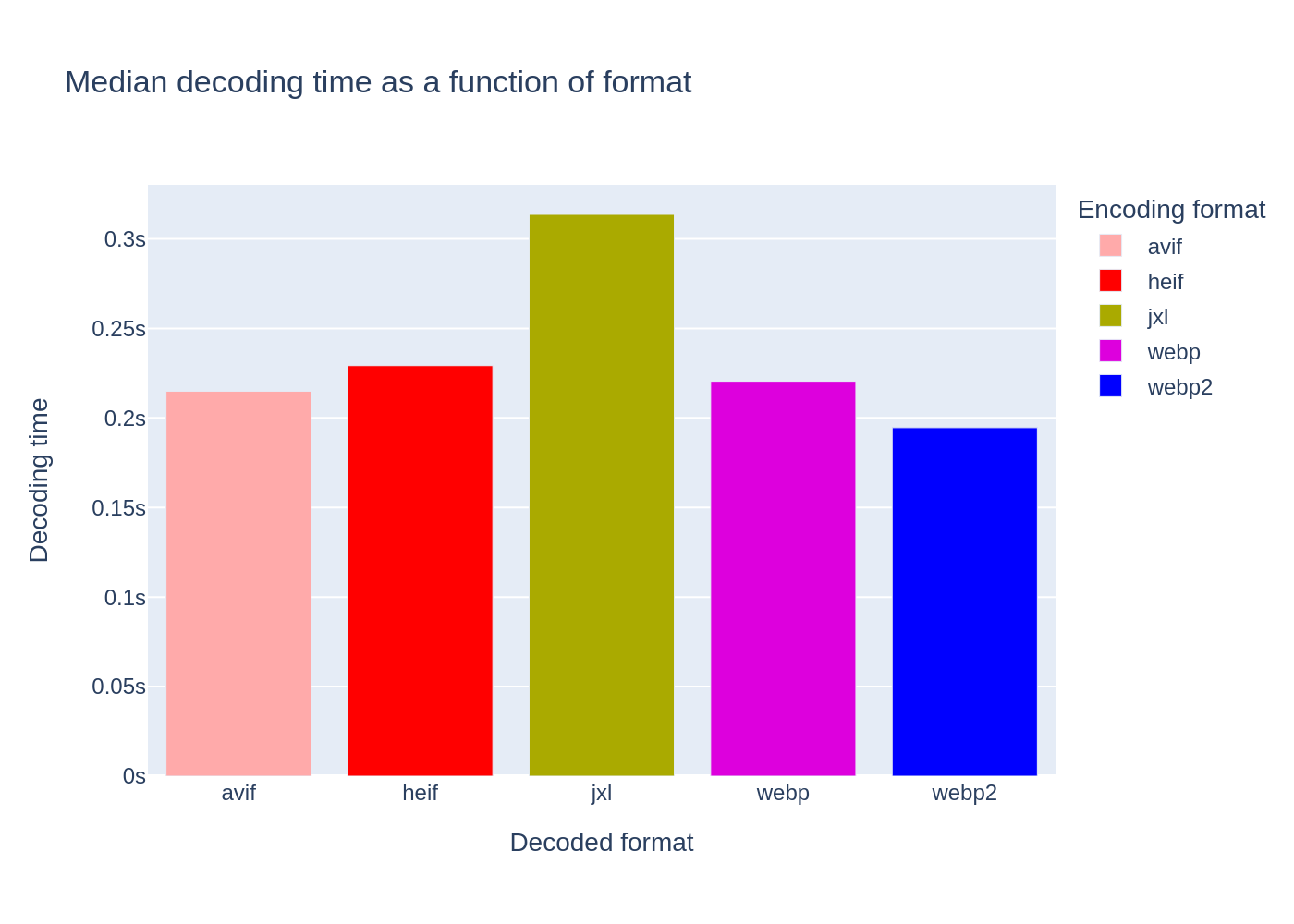

Study of decoding time

I looked at the decoding times for the different formats, but they're all pretty much the same. All images are decoded in 10s maximum by all image formats.

This result is quite surprising. It would not be surprising if an error had crept into these measurements and if, for example, it was the time taken to write to disk that was measured here.

The most likely scenario is that the PNG encoding time is of a higher order than the decoding time for the various formats.

There is a slight tendency for JPEG XL to be slower than AVIF, and for WebP2 to be faster than AVIF.

Here, I have not enabled progressive decoding of JPEG XL. It would have been interesting to see whether progressive decoding of JPEG XL would have resulted in a faster display.

A look back at the study 👀

I think this study can be improved. I plan to repeat this comparison in July 2024 and would like to list the points for improvement.

- Improve reproducibility with a Docker image.

- Compare at equal BPP (bits per pixel), looking at time and quality, and at equal quality, looking at BPP and time. This is particularly complicated, but it can be done.

- Study more encoders. Here, I have conflated encoder and format, but the quality of the encoder plays a large part in how a format comes across.

- Use several image quality metrics.

- Include the BPG (Better Portable Graphics) format for comparison.

- I wanted to compare only lossy image encodings, except that JPEG XL at quality 100 is automatically lossless. ❌ Next time I'd like to include the lossless modes of the different encoders.

- Check the validity of the decoding times.
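For the BPP point above, the metric itself is simple to compute: the number of bits in the file divided by the number of pixels. Here is a minimal sketch, with a made-up file size and dimensions.

```python
def bits_per_pixel(file_size_bytes, width, height):
    """Return the number of bits used per pixel for an encoded image."""
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    return file_size_bytes * 8 / (width * height)


# Hypothetical 1000x1000 image encoded in 250 000 bytes.
print(bits_per_pixel(250_000, 1000, 1000))  # 2.0
```

Comparing at equal BPP then means picking, for each encoder, the settings whose output lands closest to a target value, which is where the difficulty lies.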

Conclusion

Note that in all cases AVIF, WebP2 and JPEG XL are generally better choices than JPEG or PNG.

Taking into account current browser support (August 2023): only use AVIF on its own if Edge (or QQ Browser) is not essential for you. If you can, use the [picture](https://developer.mozilla.org/fr/docs/Web/HTML/Element/picture) tag to prepare for future image formats. You can then offer AVIF to the browsers that support it and fall back to WebP [6] otherwise.
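As an illustration of this advice, here is a sketch of a helper that a site generator could use to emit the markup. The function name, the file naming scheme (same base path with different extensions) and the optional legacy fallback are assumptions. Only the AVIF-first, WebP-otherwise order comes from the recommendation above.

```python
from html import escape

MIME_TYPES = {"avif": "image/avif", "webp": "image/webp"}


def picture_markup(base_path, alt, legacy_ext=None):
    """Build a <picture> element: AVIF first, then WebP, then an optional legacy file."""
    base = escape(base_path, quote=True)
    formats = ["avif", "webp"]
    if legacy_ext is None:
        fallback = formats.pop()  # WebP becomes the <img> fallback
    else:
        fallback = legacy_ext     # e.g. "png" for very old browsers
    lines = ["<picture>"]
    for fmt in formats:
        lines.append(f'  <source srcset="{base}.{fmt}" type="{MIME_TYPES[fmt]}">')
    lines.append(f'  <img src="{base}.{fallback}" alt="{escape(alt, quote=True)}">')
    lines.append("</picture>")
    return "\n".join(lines)


print(picture_markup("/img/eiffel_tower", "The Eiffel Tower"))
```

The browser picks the first `<source>` whose type it supports and otherwise loads the `<img>`, so the order of the sources matters.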

Taking into account only the qualities and shortcomings of the various encoders (for example for storage purposes, or in the future when these formats are supported by browsers), JPEG XL should be used for all photographs. For PNGs, it is difficult to choose between AVIF and WebP2. In the general case, choose WebP2 with an effort of 6 and a quality of 90, or AVIF with a quality between 80 and 90. If you process your images manually, test AVIF and WebP2 and see which one best matches your data. If you do automatic processing, preferably use WebP2, but bear in mind that AVIF is also a very good candidate, especially if you are constrained by encoding time or CPU load, or if you have images of arbitrarily large dimensions.

So what I'm observing contradicts other studies on the subject. This may be due to the progress made by the encoders: libjxl and libheif are under active development, while libwp2 is on hold. Despite the encoder's maturity, it seems that WebP2 will not go beyond the experimental stage.

Additional Resources

- The torrent file (magnet link 🧲).

- The raw data.

[1] The implementation used (in Rust) is by Kornel, whom I thank.

[2] Technically, a dissimilarity calculation. Here, we are looking for structural similarities between two images using the [SSIM algorithm](https://fr.wikipedia.org/wiki/Structural_Similarity). This is one of the methods for estimating the difference in perception between two images.

[3] Low-resolution images, mainly from the misc category.

[4] From the paper "Hamming Embedding and Weak Geometric Consistency for Large Scale Image Search", presented at the 10th European Conference on Computer Vision in October 2008.

[5] Each level of effort has a textual name, and I find that very funny.

| Level | Name |
|---|---|
| 1 | lightning |
| 2 | thunder |
| 3 | falcon |
| 4 | cheetah |
| 5 | hare |
| 6 | wombat |
| 7 | squirrel |
| 8 | kitten |
| 9 | tortoise |

[6] Or PNG if you need to support very old browsers.